X = Y

From Mondothèque

Revision as of 16:19, 9 January 2016 by Dickreckard

0. innovation of the same

INCOMPLETE DRAFT

This stance is not limited to images: the main discourse shaping the exhibitions held at the Mundaneum basically maintains that the Internet / Google (leaving aside for now the issue of the interchangeability of Google and the Internet) is keeping alive the utopia of Paul Otlet, who unfortunately did not have access, in his time, to adequate technology to realize it.

Even though there are obvious links and similarities between the two projects, one cannot dismiss as details the facts that Otlet was an internationalist, a socialist and a utopian whose project was not profit-oriented and, most importantly, that he was living in the temporal and cultural context of modernism at the beginning of the twentieth century.

Such constructions of identity and continuity in fact detach something from its specific historical frame, ignoring the different scientific, social and political milieus that Otlet's Mundaneum was part of. This means that such continuities exclude the discordant or disturbing elements that are inevitable when one considers such a complex figure in his entirety.

This is not surprising, given the focus on information technology: this type of a-historical perspective is really common in Silicon Valley culture.

For example, everybody is familiar with the tendency to describe new IT products as groundbreaking, innovative and 'different from anything seen before'. In other situations there is instead the complementary habit of maintaining that something is 'exactly the same' as something else that already existed (Golumbia). While difference is used to surprise and amaze, sameness is there to reassure and comfort. For example, Google Glass was marketed as revolutionary and innovative, but when it was attacked for its blatant privacy issues, it was described as just a camera and a phone joined together.

This sort of a-historical attitude pervades the discourse of many techno-capitalists, drawing a cartoonish view of the past, punctuated by great men and great inventions. The Internet then becomes the invention of a few father/genius figures, rather than a long and complex interaction of the diverging efforts and interests of academics, entrepreneurs, companies and universities.

To sum up a simplified narrative: on the one hand, technological advancements are going to completely redesign the way we live, so we have to be ready to give up our old-fashioned ideas of life and culture when the new comes. On the other hand, that is nothing to worry about; after all, that is how society has always evolved, and undoubtedly for the better.

For each groundbreaking new invention that is questioned, there is always a previous invention that was aiming for the same ideal, with just as many detractors... Great minds think alike, after all.

This instrumental use of history is consistent with much of the theoretical ground on which the Californian Ideology stands, whose conception of history is pervaded by various strains of technological determinism (from Marshall McLuhan to Alvin Toffler) and capitalist individualism (either in generic neoliberal terms, or in the fervent objectivism à la Ayn Rand).

With the intention to return Otlet's figure to some of its historical complexity, a

Instead of trying to dispel the advertised continuity of the 'Google of paper',

It can be interesting, instead, to play the 'exactly the same' game ourselves, but choosing to focus on other types of sameness in the story, which make the story more complicated and more telling about the present.

Here follow three such 'samilarities', which look at three aspects of continuity between the documentation theories and archival experiments Otlet was involved in, and the cybernetic theories and practices of which Google, in its capitalist enterprise, is an exponent. First is a look at the relation between human and machine that is structured by the two assemblages, in particular the role of cognition workers, who are always necessary for information structures to work and who often tend to be forgotten.

Then comes an account of the elements of distribution and control that are present in the idea of a 'reseau mundaneum', and of its implicit interaction with other types of existing infrastructure, both of which echo the functioning of datacenters.

Finally there is the common idea of an underlying formula or algorithm that has the promise of reading the world.

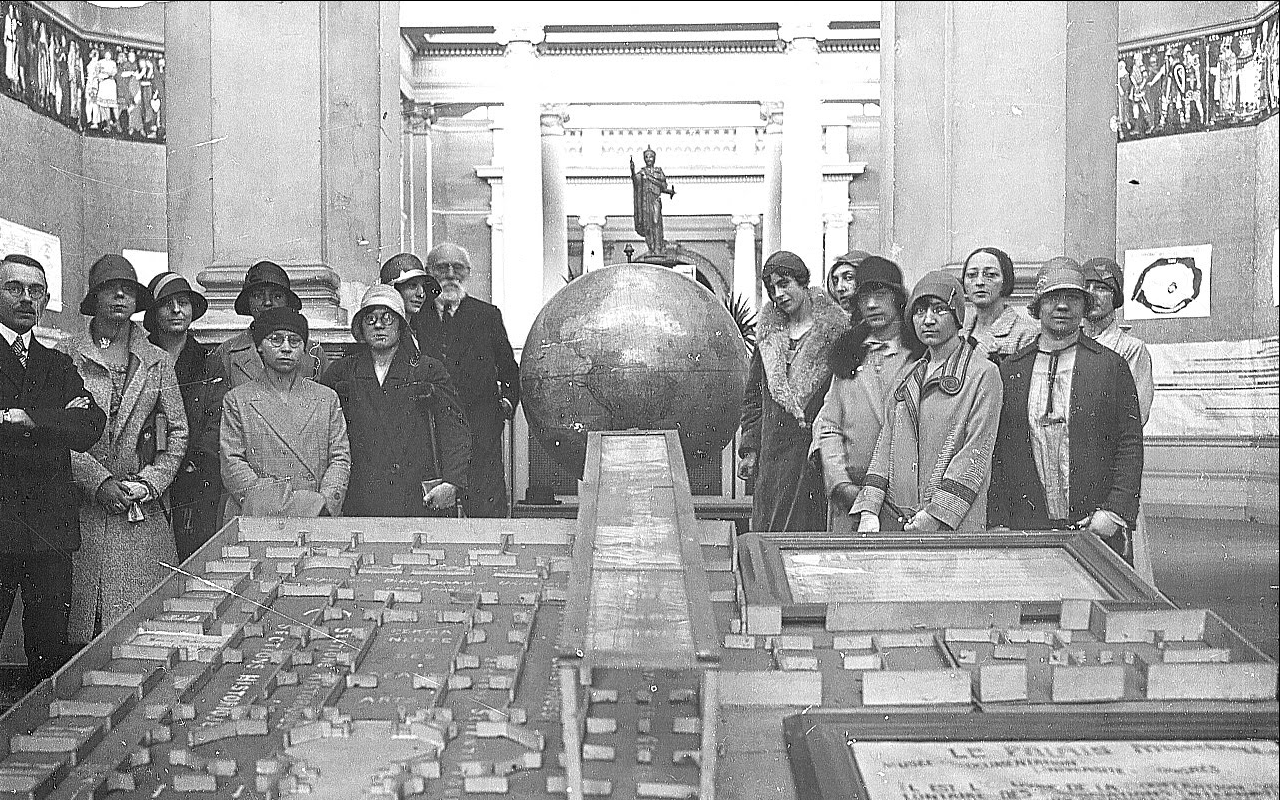

In a picture from xxxx you can see Paul Otlet together with the rest of the team of the Mundaneum. It is a very nice group picture, which also tells us something that is not that present in the written archive: who else worked at the Palais Mondial, besides Otlet and La Fontaine?

These mostly unidentified women were the workforce that kept the archival machine running. The huge quantities of cards had to be written, distributed and filed in the tiroirs (drawers) continuously, and obviously Otlet could not do all the work himself. As with telephone operators or early computer operators, women were often hired for repetitive work that required specific knowledge and precision, since even the brightest women would have had a hard time being allowed to design systems, let alone direct them (and still do in most of the world).

In a drawing called Laboratorium Mundaneum, the project is depicted as a huge factory, processing books and documentation into end products rolled out via a UDC locomotive. In fact, like a factory, Mundaneum was highly dependent on infrastructures and the bureaucratic and logistic modes of organizing labour deriving from mass-production.

Here a pattern of similarity emerges, still very present nowadays: all the magical, repetitive, machinic tasks that 'technique' can do for us are based on human labour. In fact, unlike in industrial production, where workers have usually been given their place in history as a figure, mostly thanks to workers' movements and struggles, the so-called cognitive worker is mostly hidden or under-represented.

A hundred years after the Mundaneum's unidentified women, automation has not really freed us from labour. Computational linguistics, neural networks, optical character recognition: all the most amazing machinic performances are actually based on huge numbers of humans performing repetitive cognitive operations that the software will 'learn from'.

Mechanical turks, content verifiers, annotators of all kinds... Unless the work can be outsourced to English-speaking countries with lower wages, like India, in the Western world these workers follow a pattern: female, lower income, ethnic minorities.

Like all other IT giants, Google has its own share of invisible workers. One interesting case is that of the ScanOps, as artist Andrew Norman Wilson called the workforce he discovered during his artistic residency at the Google offices in Mountain View. http://www.andrewnormanwilson.com/WorkersGoogleplex.html

One set of Google workers, holding a different type of badge, is isolated in one part of the complex and kept apart from the rest of the workers, both by having access to just one facility and by the way their time schedules are arranged. The task of these workers consists in scanning the pages of printed books to be added to the Google Books database, work that in some cases is still more convenient to do by hand (with delicate or rare books, for example). Predominantly female and predominantly from ethnic minorities, these workers are secluded from the actual glorified workers of the company, the programmers, revealing a will to hide what the machine can't do 'yet'.

This workforce can still be traced through the result of its work, though, as seen in the following project by Andrew Norman Wilson. http://www.andrewnormanwilson.com/ScanOps.html

It is somehow reassuring that, when looked at with the right eyes, the final product in some ways retains the traces of the workforce involved, and that even with the progressive removal of human signs from a technical infrastructure, this role can never be hidden completely.

In the very same way, there are many traces of human work in the Mundaneum archive... They can be found in the pictures of the inside of the Palais Mondial (unlike the Google Books workplace, of which no image exists yet), or in the elements one can find on the cards themselves.

http://www.mondotheque.be/wiki/index.php/File:Archives_MundaneumDSC04638.jpg fill in the gap. the archive is full of traces of unnamed workers.

[...]

—

b. centralization - distribution - infrastructure

In 2013, in the days in which prime minister Di Rupo was celebrating the beginning of the second phase of construction of the datacenter, a few hundred kilometers away something very similar to what had happened a few years before in Mons was unfolding. In the small town of Eemshaven, a port in the province of Groningen, the Netherlands, Groningen Sea Ports and NOM development were in secret talks with another[crystal comp] temporarily named firm, "Saturn", to deploy a datacenter in the infrastructural wonder of Eemshaven. When, many months later, the party was revealed to be Google, Harm Post, director of Groningen Sea Ports, commented: "Just ten years ago Eemshaven was the standing joke of the ports, a case to look down upon of industrial development in the Netherlands, the planning failure of the last century. And now Google is building a very large data center here, which is 'pure advertisement' for Eemshaven and the data port." Again, further details on taxes were not disclosed, and the final number of jobs is considered to be around 150.

Another region had the fantastic luck to be chosen by Google, just like Mons. But why there, actually? Well, datacenters necessarily need to interact with existing infrastructures and flows of various types. There are three main necessities: being near a massive source of electrical power (better if green, in long-term thinking); being near a source of clean water, for the massive cooling needs; and being near Internet infrastructure that can assure proper connectivity. There is then a whole set of other non-technical elements, which we can describe as the social, economic and political "climate", that proved favorable in Mons and Eemshaven.

This fundamental importance of infrastructures can be traced back to the logistic organization of life first implemented with the industrial revolution, and theorized with modernism. Again, some suggestions of something quite 'exactly the same' come from the history of Paul Otlet.

After the closure of the Mundaneum in 1934, Otlet got involved in the competition for the development of the Left Bank in Antwerp, attempting to build a new Mundaneum as part of a 'Cite Mondiale'. The most famous modernist urbanists of the time were invited to plan from scratch the development of the left side of the river, at the time completely unbuilt. Otlet lobbied for the insertion of a Mundaneum in the projects, stressing how it would create hundreds of jobs for the region. He also flattered Flemish pride by stressing how the inhabitants of Antwerp, often more hard-working than the Bruxellois, would finally obtain their deserved recognition, raising their city to World City status. He partly succeeded in his propaganda, given that, apart from his own proposal developed in collaboration with Le Corbusier, many other participants included Otlet's Mundaneum as a key facility in their plans. In these proposals for new development, Otlet's archival infrastructure was shown in interaction with the existing flows already running through the city, like the industrial docks, the factories, the railway and the newly constructed stock market. The modernist utopia of the planned living environment already implied organizing culture and knowledge with the same approach that was used for coal or electricity.

While the plan for Antwerp was in the end rejected in favour of more traditional housing development, 80 years later the same relation between existing infrastructural flows and the logistics of documentation storage is highlighted by the grand realization in Eemshaven. The continuous construction of new datacenters is a result of the ideological propaganda that equates computation with immediacy, unlimited storage and exponential growth. But it also finds its roots in the logistic organization proper to modernism. The key ideas of centralization, distribution and control were already present in Otlet. Centralization, which was seen as the most efficient way to organize content, also in view of international connection, already generated problems of space back then. The Mundaneum database at its peak reached 16 million entries, occupying around 150 rooms. This was a big incentive towards the insertion of the archive in newly planned city structures that would better facilitate the centralization and distribution of content. In his exchanges with Patrick Geddes[Van Acker], they imagined 'the White Link', a network to distribute heliographies in copies throughout a network of Mundaneums. Thanks to this, the same piece of information would be produced and logistically distributed. The technical importance for the Mundaneums of being next to a railway station, as well as to a radio station, is then clear when thinking about the sketches for the Mondotheque.

This key inter-relation between centralization, distribution and infrastructure is very similar to that of today's datacenters.

Over the last 10 years, Google has been pursuing a policy of end-to-end connection between its datacenters and its end-user interfaces.

From 2005 it started buying thousands and thousands of miles of unused fiber networks in the United States, assuring autonomous links between its datacenters and becoming one of the main proprietors of physical networks in the world. More recently, it also entered the business of undersea cables, financing 3 different cables between the US and Japan, and between the US and South East Asia.

This control over infrastructure, combined with the strategic distribution of its computing power in new datacenters, or, as the engineering department calls them, 'warehouse-sized computers', is fundamental for Mountain View's future plans: the cloud services that Google has started to deploy, planning to threaten Amazon's monopoly in the field. Owning the cabling and choosing the geographic placement of servers makes a lot of difference in the cloud computing field, making it possible to achieve lower latencies and a more stable service.

—

025.45UDC; 161.225.22; 004.659GOO:004.021PAG.

The Universal Decimal Classification system, developed by Otlet and La Fontaine on the basis of the Dewey Decimal Classification system, is still considered one of the most important achievements of the two men, as well as a cornerstone of Otlet's overall vision. Its adoption, revision and use up to the present day demonstrate a thoughtful and successful approach to the open issue of the classification of knowledge.

The Decimal part in the name means that any record can be further subdivided into tenths, virtually infinitely, according to an evolving scheme of depth and specialization. For example, 1 is “Philosophy”, 16 is “Logic”, 161 is “Fundamentals of Logic”, 161.2 is “Statements”, 161.22 is “Type of Statements”, 161.225 is “Real and ideal judgements”, 161.225.2 is “Ideal Judgements” and 161.225.22 is “Statements on equality, similarity and dissimilarity”.
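The nesting described above can be made concrete with a minimal Python sketch. It assumes only plain main numbers (none of UDC's auxiliary signs) and the convention, consistent with the example, that a dot is written after every third digit; the names `format_udc`, `ancestors` and `LABELS` are hypothetical and exist only for this illustration.

```python
# Labels copied from the example above; everything else is illustrative.
LABELS = {
    "1": "Philosophy",
    "16": "Logic",
    "161": "Fundamentals of Logic",
    "161.2": "Statements",
    "161.22": "Type of Statements",
    "161.225": "Real and ideal judgements",
    "161.225.2": "Ideal Judgements",
    "161.225.22": "Statements on equality, similarity and dissimilarity",
}


def format_udc(digits: str) -> str:
    """Write a bare digit string with a dot after every third digit."""
    return ".".join(digits[i:i + 3] for i in range(0, len(digits), 3))


def ancestors(notation: str) -> list[str]:
    """Return every class that contains `notation`, from broadest to narrowest.

    Each extra digit is one more decimal subdivision, so the ancestors of a
    class are simply the prefixes of its digit string.
    """
    digits = notation.replace(".", "")
    return [format_udc(digits[:n]) for n in range(1, len(digits) + 1)]


if __name__ == "__main__":
    for code in ancestors("161.225.22"):
        print(code, "-", LABELS.get(code, "(unlabelled)"))
```

Reading a class number from left to right thus walks down the tree from the broadest field to the most specialized subdivision, which is what allows the scheme to be extended in depth without renumbering.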

The UDC though, unlike Dewey and other bibliographic systems, had the potential to exceed the function of ordering alone. The complex notation system could classify phrases and thoughts in the same way as it would classify a book, going well beyond the mere function of classification and becoming a real language. One could in fact express whole sentences and statements in UDC format.* The fundamental idea, described by Otlet with the word “dépouillement”, was that books and documentation could be broken down into their constitutive sentences and boiled down to a basic set of meanings, regulated by the decimal system.

This would allow universal concepts to be expressed in a numerical language, fostering international exchange beyond translation and making the work of science easier by regulating knowledge with numbers.

One has to set this idea into its time, shaped by positivism and the belief in the unhindered potential of science to obtain objective universal knowledge in all fields. Especially taking into account the arbitrariness of the decimal structure, this today sounds doubtful, if not preposterous.

?This duality, has been both the strength and the weakness of UDC.?*?

This linguistico-numeric element of UDC, which makes it possible to express fundamental meanings by numbers, nevertheless plays a key role in the project of Paul Otlet. What one is led to think, taking into account Otlet's overall path, is that numerical knowledge would be the first step towards a science of combining these basic sentences to produce new meaning in a systematic way.

When one looks at Le Monde, Otlet's work from 1935, the continuous reference to multiple algebraic formulas* that describe how the world is composed suggests that one could “solve” such equations, to modify the world accordingly.

As a complement to Le Traité de Documentation, which described the systematic classification of knowledge, Le Monde set the basis for the transformation of this knowledge into new meaning.

Otlet wasn't the first to envision an 'algebra of thought'. It has in fact been a recurring topos of modern philosophy, under the influence of scientific positivism and in concurrence with the development of mathematics and physics. Even though one could trace it even further back, to Ramon Llull and even earlier forms of combinatorics, the first sameness in the line of this type of scientific and philosophical challenge was Gottfried Leibniz.

The German philosopher and mathematician, a precursor of the field of symbolic logic, which was developed later in the 20th century, was researching a method by which statements could be reduced to minimum terms of meaning. He was famously looking for a language which “... will be the greatest instrument of reason,” for “when there are disputes among persons, we can simply say: Let us calculate, without further ado, and see who is right”.*

His inquiry was also divided into two phases. The first one, analytical, the characteristica universalis, was a universal conceptual language to express meanings, of which it is only known that it worked with prime numbers. The second one, synthetical, the calculus ratiocinator, was the algebra that would allow operations between the meanings, of which there is even less knowledge.

The idea of calculus was related to the infinitesimal calculus, a fundamental development in the field of mathematics that Leibniz conceived, and that Newton concurrently developed and popularized.

Even though not much remains of Leibniz's work on this 'algebra of thought', the task was later taken on by mathematicians and logicians in the 20th century. Most famously, and curiously enough in the same years as Otlet was publishing Traité and Monde, logician Kurt Gödel used the same idea of a translation to prime numbers to demonstrate his incompleteness theorem.*
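The idea of translating statements into numbers by way of primes can be illustrated with a minimal Python sketch in the spirit of Gödel numbering: the n-th symbol of a formula becomes the exponent of the n-th prime, and the formula is the product of these powers. The symbol table `SYMBOLS` and the function names are hypothetical choices made for this illustration; they are not Gödel's own assignment, and nothing here reconstructs Leibniz's scheme.

```python
from itertools import count

# Hypothetical symbol codes (any distinct positive integers would do).
SYMBOLS = {"0": 1, "s": 2, "=": 3, "+": 4, "(": 5, ")": 6}


def primes():
    """Yield 2, 3, 5, 7, ... using trial division by the primes found so far."""
    found = []
    for n in count(2):
        if all(n % p for p in found):
            found.append(n)
            yield n


def godel_number(formula: str) -> int:
    """Encode a formula as the product of prime(i) ** code(symbol_i)."""
    number = 1
    for prime, symbol in zip(primes(), formula):
        number *= prime ** SYMBOLS[symbol]
    return number


def decode(number: int) -> str:
    """Recover the formula by reading off the exponent of each prime in turn."""
    inverse = {code: symbol for symbol, code in SYMBOLS.items()}
    symbols = []
    for prime in primes():
        if number == 1:
            break
        exponent = 0
        while number % prime == 0:
            number //= prime
            exponent += 1
        symbols.append(inverse[exponent])
    return "".join(symbols)


if __name__ == "__main__":
    n = godel_number("0=0")   # 2**1 * 3**3 * 5**1
    print(n, decode(n))
```

Because every integer factorizes into primes in exactly one way, the encoding loses nothing: the number can always be read back into the statement, which is what lets statements about formulas be recast as statements about numbers.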

///

The relation between representation, translation and organization of knowledge is one of the open issues at work in the field of web search. At the beginning of the Web, around the mid-90s, two main approaches to online search for information emerged: the web directory and web crawling. Some of the first search engines, like Lycos or Yahoo, started with a combination of the two. The web directory consisted in the human classification of websites into categories, done by an “editor”; crawling consisted in the automatic accumulation of material by following links, with various rudimentary techniques to assess the content of a website.* With the exponential growth of web content on the Internet, web directories were soon dropped in favour of the more efficient automatic crawling, which in turn generated so many results that their quality became of key importance. Quality here means both the assessment of the webpage content in relation to keywords and the sorting of results according to their relevance.

Google's hegemony in the field has mainly been obtained by translating the relevance of a webpage into a numeric quantity according to a formula, the infamous PageRank algorithm.* This value is calculated from the relational importance of the webpage where the word is placed, based on how many other websites link to that page. The classification part is long gone, and linguistic meaning is also structured along automated functions. What is left is reading the network formation in number form, capturing the human opinions diffused in hyperlinks, both about which word links to which webpage and about which webpage is in general more important.
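To give a sense of what "translating relevance into a numeric quantity" can look like, here is a minimal Python sketch of the simplified PageRank iteration as it is commonly presented. It is not Google's production system: the four-page graph is invented, the damping factor of 0.85 is the conventional textbook value, and the function name `pagerank` is an assumption of this example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Rank pages by repeatedly passing each page's score along its links.

    `links` maps every page to the list of pages it links to; every link
    target must itself appear as a key.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1 / n for page in pages}
    for _ in range(iterations):
        # Every page receives a small baseline share, whatever its links.
        new_rank = {page: (1 - damping) / n for page in pages}
        for page, outgoing in links.items():
            if outgoing:
                # A page splits its damped score equally among its targets.
                share = damping * rank[page] / len(outgoing)
                for target in outgoing:
                    new_rank[target] += share
            else:
                # A page without outgoing links spreads its score evenly.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


if __name__ == "__main__":
    # Hypothetical web of four pages: three of them link to "c".
    web = {"a": ["c"], "b": ["c"], "c": ["a"], "d": ["c"]}
    for page, score in sorted(pagerank(web).items(), key=lambda kv: -kv[1]):
        print(page, round(score, 3))
```

Nothing in the computation looks at what a page says: the scores depend only on the shape of the link graph, and a link from a highly ranked page counts for more than one from an obscure page.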

In the same way as UDC systematized documents via a notation format, the systematization of relational importance in numerical format allows for functionality and efficiency.

In this case though, rather than linguistic, the translation is value-based, quantifying network attention independently from meaning. The interaction with the other infamous Google algorithm, AdSense*, means that an economic value is intertwined with the PageRank position.

The influence and profit deriving from how high a search result is placed mean that the relevance of a word-website relation in Google search results translates into an actual relevance in reality.*

So we could say that even though the task that Otlet and Google say they are after, “organizing knowledge”, is the same, the approaches on which the respective projects are founded are at opposite corners of the field of epistemology.

Taking the foundational analytic/synthetic dichotomy as introduced by Kant, the study of knowledge calls analytic the propositions which are true by virtue of their meaning*, and synthetic the propositions which are true by how their meaning relates to the world*.

UDC is an example of a completely analytical approach, which breaks down existing knowledge into its components. Its propositions could be exemplified with the sentences “Logic is a subdivision of Philosophy”, or “PageRank is an algorithm, part of the Google search engine”.

PageRank is instead an extremely synthetical approach, starting from the form of the network, devoid in principle of any intrinsic meaning or truth, and making a model of its relational truths. Its propositions could be exemplified with “Wikipedia is of utmost relevance”, or “The University of the District of Columbia is the most relevant meaning of the word 'UDC'”.

Having specified this basic difference, a consequent issue is that while the usefulness and influence of a search engine like Google are obviously beyond question, such a structure has become so influential that it now produces its own truths. This raises clear doubts about a structure controlled solely by a corporation, which by definition, and beyond mottos and utopias, has the sole objective of making profits and obeying its stakeholders.